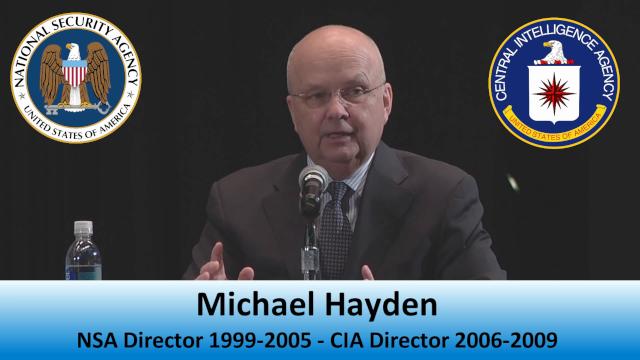

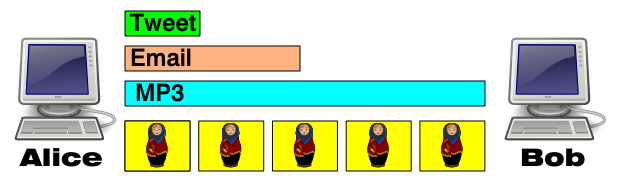

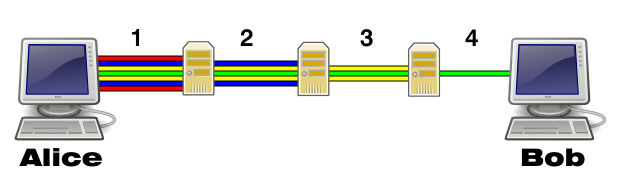

There is a common refrain that the web can never be secure. Support for it surfaces whenever someone is caught, or a flaw is found in software or an algorithm. The controversies surrounding the TOR Project in late 2014 seem to support the idea that security is impossible. We must separate fundamental concepts from flawed implementations. We also need to take a serious look at TOR and consider, without emotional bias, whether a serious flaw was designed in.

The basic concepts, such as public key cryptography, are sound and can be relied on. Particular algorithms and implementations are sometimes flawed, as the breaking of the MD5 hash algorithm showed. Even so, for 99.999% of the users of MD5, it too remains sound. The vast majority of security compromises occur because of secondary issues, from the password on the Post-it note below the keyboard to viruses that fool people rather than break security.

Around 2011 I started working seriously on technology to secure the web that would later be named Matryoshka since, like the onion, the Russian nested dolls make a good visual analogy. In many respects Matryoshka and Onion Routing are almost identical. (TOR is an acronym for The Onion Router.) Since my background in communications and cryptography dates back to the 1970s, the basic concepts are familiar to me, as are the ways to break them. These are well understood by those in the field.

Traffic Analysis

With Matryoshka, the first hole to plug was traffic analysis. A number of simple, easily understood things give clues about who is communicating with whom, when, and where. This is the metadata, a term that has now entered general conversation. Metadata includes not only IP addresses but also locations, times, and, very importantly, message length. If you are observing messages going between Alice and Bob but have no idea about the content because of encryption, you can still determine a lot of information.
A simple example: if you see a 140-byte message, you might assume it is a tweet. If it is about 5 KB in length, you might assume an email. If it is 3 MB, it could be an MP3 song.

However, there is an obvious and well-understood way to hide this metadata. Alice sends Bob a continuous stream of fixed-length messages. Since they are encrypted, the content is secure. Since they are continuous, observers have no idea whether any given message is real or simply random garbage. Since they are all the same size, observers have no idea what kind of content is being sent. This is a simple and complete solution for traffic analysis, and nothing new. This may not seem important, but it is the basis of the hole in TOR.

Onion Routing or the Matryoshka Model

The second thing to hide is where the message is coming from and going to. To do this, Alice’s computer encrypts the message for Bob, then re-encrypts the result for the computer before Bob, repeats this for each of the other computers on the route, and finally sends the message. Each computer on the route decrypts one layer and sends the message on until Bob finally receives it. This is like peeling an onion or separating Matryoshka dolls.

While there are numerous other details, with this general idea no given computer on the route knows both where the message originated and where its final destination is. Therefore, even if a nefarious party is running one or more of these computers, they cannot tell anything about the traffic, nor can they inject anything into it. It should be noted that many forms of encryption can be broken with enough computing power. However, such attacks rely on having some idea of what valid decrypted data looks like. When the data being decrypted is itself another layer of ciphertext, the output has no recognizable characteristics to indicate that the decryption succeeded. This makes breaking the key a bit more difficult, perhaps somewhat akin to an unaided man throwing a rock and hitting Mars. Give it a try.
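
The two ideas so far, fixed-length messages and layered encryption, can be sketched together in a few lines of Python. This is only an illustration under loud assumptions: the 512-byte cell size, the function names, and the hash-based XOR keystream are all hypothetical stand-ins chosen so the example runs with the standard library alone. The keystream is a toy and is not secure, and the sketch leaves out the routing information that each layer would carry in a real system.

```python
import hashlib
import os

CELL_SIZE = 512  # hypothetical fixed cell length in bytes
LEN_PREFIX = 2   # bytes used to record the real message length


def keystream(key: bytes, cell_no: int, length: int) -> bytes:
    """Toy keystream (SHA-256 in counter mode). Illustration only, NOT secure."""
    out = bytearray()
    block = 0
    while len(out) < length:
        material = key + cell_no.to_bytes(8, "big") + block.to_bytes(8, "big")
        out += hashlib.sha256(material).digest()
        block += 1
    return bytes(out[:length])


def apply_layer(cell: bytes, key: bytes, cell_no: int) -> bytes:
    """XOR one layer on or off; the same call adds and removes a layer."""
    stream = keystream(key, cell_no, len(cell))
    return bytes(a ^ b for a, b in zip(cell, stream))


def pad(message: bytes) -> bytes:
    """Length-prefix the message and fill the rest of the cell with random bytes."""
    room = CELL_SIZE - LEN_PREFIX
    if len(message) > room:
        raise ValueError("message does not fit in one cell")
    filler = os.urandom(room - len(message))
    return len(message).to_bytes(LEN_PREFIX, "big") + message + filler


def unpad(cell: bytes) -> bytes:
    """Recover the real message from a fully decrypted cell."""
    size = int.from_bytes(cell[:LEN_PREFIX], "big")
    return cell[LEN_PREFIX:LEN_PREFIX + size]


def wrap(message: bytes, route_keys: list[bytes], cell_no: int) -> bytes:
    """Alice's side: Bob's layer goes on first, the first relay's layer last."""
    cell = pad(message)
    for key in reversed(route_keys):
        cell = apply_layer(cell, key, cell_no)
    return cell


if __name__ == "__main__":
    route_keys = [os.urandom(32) for _ in range(3)]  # relay 1, relay 2, Bob
    real = wrap(b"Meet at noon", route_keys, cell_no=1)
    cover = wrap(b"", route_keys, cell_no=2)  # dummy cell, same size on the wire
    print(len(real), len(cover))
    for key in route_keys:  # each hop peels exactly one layer
        real = apply_layer(real, key, cell_no=1)
    print(unpad(real))
```

A real cell and an empty cover cell have the same length on the wire, which is the property that hides message size. Because the toy layers are plain XOR they happen to commute; a real design would use an authenticated cipher per hop, where the peeling order matters.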

The origin of the TOR Project

Let’s say you are an intelligence asset in a foreign country and need to communicate with your handlers in the United States. You can safely assume that picking up a phone and calling is not a good idea. Email is as bad or worse. You need a way to communicate that will not draw any attention to you. This scenario is real and is the reason the TOR Project exists. It is well documented, and not denied, that the US Intelligence Community, spearheaded by the US Navy, funded and built the TOR Project for the purpose of hiding the communications of intelligence assets. It was clear at the outset that if their agents were the only ones using the technology, they would stand out as much as if they were communicating insecurely. They decided to make the project open and free so that anyone could use it, while they would fund it, and they still do.

TOR has been used by millions around the world to hide traffic that ranges from innocuous to abhorrent. Mixed in with the shopping list reminders, the searches for a new job, the study of an issue that may be politically incorrect, and even political dissent are those intelligence assets reporting home, as well as the vilest of the vile doing vile things. Being able to privately tell your wife that you love her is a good thing, and we don’t ban the technology for doing so just because some use the same means to speak with a mistress.

Monopolistic Practices

The United States has numerous antitrust laws to prohibit monopolistic practices. These were meant to prevent, say, a well-funded apple grower from undercutting the prices of another grower to drive them out of business. We see this practice happening constantly on the Internet without restraint. The obvious example is free email services. These services are not free to the provider, but they do make it difficult for competitors who charge for their services to compete. Apply this model to TOR. How do you compete with a free service?
We don’t question why it is free; we just use it because it is free. TOR has garnered a vast market but receives no profit from it. Or perhaps it does?

To continue the email example: Gmail appears free to the user, but in reality the user is the product. Google uses your information to essentially sell you to advertisers and others. Remember that they walk away with about $2 billion per month in advertising profits. Their product is you. Their perceived benevolence comes from selling you. Gmail users are willing victims, since the service is free and appears benign. Google makes you feel secure by using a secure connection (HTTPS) to your Gmail account. This reduces the risk of a third party seeing the content of the traffic between you and Google, but it is Google who is the reader, tracker, and exploiter of your email. You are handing them all the content and metadata. What have you gained with a secure link besides a warm fuzzy feeling and perhaps a need to be careful what you say?

Apply this same idea to TOR and perhaps you have a product that gives its users the appearance that they are secure. However, they are secure from everyone except the product provider. Now we need to see how this can be done.

The hole in TOR

Unlike the Matryoshka Model, TOR exposes packet length to observers at the end points. Consider this on a small scale. If you have the ability to see the data from a dozen computers, you could determine when one computer is sending to another even though you cannot read the data because of encryption. If you see a 456-byte message sent from computer A and, a moment later, a message of the same or similar size arrive at computer B, you could draw an obvious conclusion. However, it is a much bigger task to observe the traffic from millions upon millions of computers around the world at the same time. Who would have the ability to do that? The answer is the Five Eyes, the five nations that are party to the UKUSA Agreement, whose origins date to World War II.
These are the same parties that fund the TOR Project. The combined power of the Government Communications Headquarters (GCHQ), the National Security Agency (NSA), the Australian Signals Directorate (ASD), the Communications Security Establishment Canada (CSEC), and the Government Communications Security Bureau (GCSB) is sufficient to determine who is communicating with whom, all the time and from any point on the globe.

Was this the plan?

This raises the question of whether the hole in TOR is deliberate. Traffic analysis is the first hole plugged by Matryoshka, but it is ignored by TOR. Is it possible that those who had the forethought to give away TOR so their assets would be hidden in the crowd also had the foresight to build a system that other parties, including smaller governments, would not be able to break, but that they themselves could? To play the role of the world hegemon, they might publicly, repeatedly, and honestly state that they cannot defeat the layered encryption methods used in TOR. Users are lulled into a feeling of confidence that these mavericks of the web have defeated the all-powerful NSA. While this is true for the encryption aspect, for the metadata aspect they, and they alone, can defeat it, but they won’t mention it. When they are tracking party A and want to know who party A is communicating with, they can use traffic analysis of literally all of the computers on the web to connect party A to party B. Once the interested parties are identified, other techniques can be used to gain access to the respective parties. This might include invasive software or even hardware, such as the items found in the NSA’s ANT Product Data catalog.

Things to consider

The TOR Project provides a worthy service, just as Gmail does. The proponents of the TOR Project articulate well the issues involved in security and offer great solutions for those issues, just like Gmail does. The TOR Project seems to adeptly ignore fundamental issues, just like Gmail does.
The TOR Project violates the spirit, if not the letter, of antitrust laws, just like Gmail does. Perhaps a good analogy is this: if some man offers you a lollipop to get in the car with him, do you go?
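
The size-and-timing matching described under "The hole in TOR" can be sketched in a few lines. This is a minimal illustration, not a description of any agency's actual tooling: the `Observation` record, the `correlate` function, and the two thresholds (`max_delay`, `size_slack`) are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    host: str    # computer where the message was seen
    time: float  # seconds since some shared reference point
    size: int    # message length in bytes


def correlate(sent, received, max_delay=2.0, size_slack=16):
    """Pair senders and receivers whose message sizes and timing line up.

    max_delay and size_slack are hypothetical thresholds for this sketch.
    """
    pairs = []
    for s in sent:
        for r in received:
            delay = r.time - s.time
            close_in_time = 0 <= delay <= max_delay
            close_in_size = abs(r.size - s.size) <= size_slack
            if close_in_time and close_in_size:
                pairs.append((s.host, r.host))
    return pairs


if __name__ == "__main__":
    sent = [Observation("A", 10.0, 456), Observation("C", 10.1, 3000)]
    received = [Observation("B", 10.4, 460), Observation("D", 10.5, 1200)]
    print(correlate(sent, received))  # only A and B match on size and timing

    # With fixed-length cells every size is identical, so size no longer
    # separates the candidates and every sender pairs with every receiver.
    sent_fixed = [Observation("A", 10.0, 512), Observation("C", 10.1, 512)]
    recv_fixed = [Observation("B", 10.4, 512), Observation("D", 10.5, 512)]
    print(len(correlate(sent_fixed, recv_fixed)))
```

With distinct sizes the observer links A to B without decrypting anything. With fixed-length cells, size stops discriminating, and a continuous stream would remove the timing signal as well.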
